When we talk about asynchronous programming, the first thing we need to do is get to know a series of terms and “buzzwords” like asynchrony, concurrency, and parallelism.

As I've said before, the world of asynchrony is complicated; I won’t lie to you. In fact, the topic could easily fill its own course, which is obviously not the purpose of this article.

But, as a programmer, there are certain terms that should sound familiar. And I’ll tell you right now that they are closely related words, and we could even say they are a bit mixed up and tangled with each other.

Don’t worry, I don’t plan to be too pedantic about the differences between them. In the end, they are just words. What matters is knowing the concepts and what each one of them means, even if only briefly.

So let’s start with the most basic, the synchronous process 👇

Synchronous and Blocking Process

A synchronous and blocking process is one that executes sequentially, waiting for each operation to finish before starting the next one.

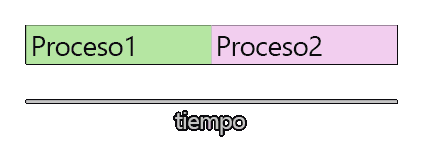

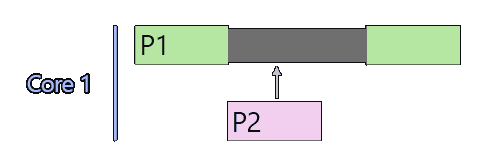

That is, if your program had two tasks, “process 1” and “process 2”, process 2 would wait for process 1 to finish and would start immediately after it completes.

In this case, the processes are synchronous because they start one after the other. Furthermore, process 1 is blocking because process 2 cannot start until its execution finishes.
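As a minimal sketch of this in Python (the `process` and `run_sequentially` names are made up for illustration, and `time.sleep` just stands in for real work), two blocking calls placed one after the other behave exactly as described:

```python
import time

def process(name, seconds, log):
    """Simulate a blocking task: nothing else runs until it returns."""
    log.append(f"{name} start")
    time.sleep(seconds)  # stands in for real work
    log.append(f"{name} end")

def run_sequentially():
    log = []
    process("process 1", 0.1, log)  # blocks here until process 1 is done
    process("process 2", 0.1, log)  # only starts after process 1 has finished
    return log

print(run_sequentially())
# ['process 1 start', 'process 1 end', 'process 2 start', 'process 2 end']
```

The total time is simply the sum of both tasks, because neither one ever runs while the other is in progress.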

This type of process is the first one you will learn. It’s the simplest to understand and program. The “old-fashioned” one, so to speak. Here there is no asynchrony, no concurrency, nothing at all.

Concurrency

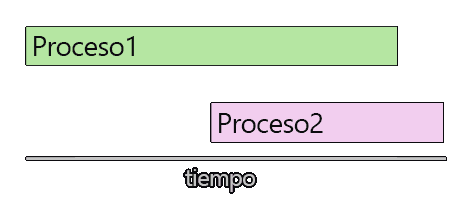

Concurrency refers to a system’s ability to execute multiple tasks that overlap in time. That is, they concur in time (hence its name).

By definition, two tasks are concurrent if one of them starts between the start and end of the other. For example, process 2 starts while process 1 is still running.

By saying that two tasks are concurrent, we haven’t said anything about “how” that concurrency happens or what mechanism achieves it.

We are simply saying that for a period of time, both are in progress. That is, all we have literally said is that they coincide in time.
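If it helps, the definition can be written down as a tiny check. This is only a sketch: the `Task` class and `are_concurrent` function are hypothetical names, times are plain numbers, and I am assuming that a task starting at the exact instant another one ends does not count as overlapping.

```python
from dataclasses import dataclass

@dataclass
class Task:
    name: str
    start: float  # moment the task begins
    end: float    # moment the task finishes

def are_concurrent(a: Task, b: Task) -> bool:
    """True if one task starts between the start and end of the other."""
    return a.start <= b.start < a.end or b.start <= a.start < b.end

t1 = Task("process 1", start=0, end=5)
t2 = Task("process 2", start=3, end=8)  # starts while process 1 is running
t3 = Task("process 3", start=5, end=6)  # starts once process 1 has ended

print(are_concurrent(t1, t2))  # True
print(are_concurrent(t1, t3))  # False
```

Notice that the check only looks at start and end times. It says nothing about cores, threads, or who is doing the work, which is exactly the point.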

Parallelism and Semi-Parallelism

Now we get to parallelism and semi-parallelism. Here we are indeed talking about a specific way to achieve concurrency, through the simultaneous execution of multiple processes.

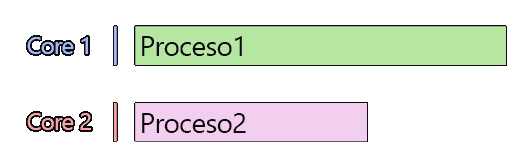

The first thing to remember is that, in general, a single-core processor can only execute one process at a time. That’s how they are built and that’s how they work.

If our processor has several cores, we can achieve true parallelism. This means that each core can be in charge of executing one of the processes.
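Here is a rough sketch of what that can look like in Python, using the standard `concurrent.futures.ProcessPoolExecutor`. The `count_primes` and `run_in_parallel` functions are invented for the example, and keep in mind that it is the operating system that decides which core each worker process actually lands on, so this asks for parallelism rather than guaranteeing it.

```python
import os
from concurrent.futures import ProcessPoolExecutor

def count_primes(limit):
    """CPU-bound work: count the primes below `limit` by trial division."""
    count = 0
    for n in range(2, limit):
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count

def run_in_parallel(limits):
    """Hand each limit to a separate worker process."""
    with ProcessPoolExecutor() as pool:
        return list(pool.map(count_primes, limits))

if __name__ == "__main__":
    # The guard is needed on platforms that start workers by re-importing this file.
    print("cores available:", os.cpu_count())
    print(run_in_parallel([50_000, 50_000]))
```

With at least two free cores, the two counts can be computed at the same time instead of one after the other.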

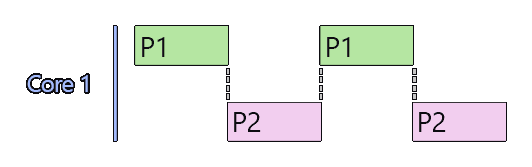

However, even when our processor does not have multiple cores to execute processes in parallel, we can still emulate it with semi-parallelism (a technique usually known as time slicing or multitasking).

Basically, the processor switches from task to task, dedicating a slice of time to each of them. The operating system is in charge of this time allocation and task management.

In this way, an illusion is created that both are executing simultaneously.
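To get a feel for the idea, here is a toy simulation. It is not how a real operating system scheduler works (real ones interrupt tasks by force, while here each task politely pauses itself with `yield`), and the `task` and `round_robin` names are made up. It only shows how alternating small slices on a single worker produces an interleaved timeline.

```python
from collections import deque

def task(name, steps):
    """A task that does its work in small slices, pausing after each one."""
    for i in range(1, steps + 1):
        yield f"{name} step {i}"  # pause here and hand control back

def round_robin(tasks):
    """Toy scheduler: run one slice of each task in turn until all finish."""
    queue = deque(tasks)
    timeline = []
    while queue:
        current = queue.popleft()
        try:
            timeline.append(next(current))  # run one slice
            queue.append(current)           # send it to the back of the line
        except StopIteration:
            pass                            # task finished, drop it
    return timeline

print(round_robin([task("process 1", 3), task("process 2", 2)]))
# ['process 1 step 1', 'process 2 step 1', 'process 1 step 2',
#  'process 2 step 2', 'process 1 step 3']
```

At any given instant only one task is running, but if the slices are short enough, from the outside both appear to advance together.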

Logically, parallelism reduces overall processing times when the work can be split up. If you have two cores (let’s imagine each one is as powerful as the single core would be on its own), the total processing time is going to be smaller.

But even in the case of semi-parallelism, with a single core, processing times can be reduced. This is because processes often have waits.

Suppose process 1 has a wait. Semi-parallelism allows the processor to run process 2 (or a part of it) during that gap, so that waiting time is not wasted.
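A quick way to see this gain is with two threads that mostly wait. This is a sketch under some assumptions: `time.sleep` plays the role of the wait, and `process_with_wait` and `run_interleaved` are hypothetical names. In standard CPython, threads do not run Python code in parallel anyway, so any time saved here comes from overlapping the waits, not from extra cores.

```python
import threading
import time

def process_with_wait(name, wait_seconds, log):
    """Simulate a task that spends most of its time waiting (e.g. on I/O)."""
    log.append(f"{name} start")
    time.sleep(wait_seconds)  # while this thread sleeps, another one can run
    log.append(f"{name} end")

def run_interleaved(wait_seconds=0.3):
    log = []
    threads = [
        threading.Thread(target=process_with_wait, args=(f"process {i}", wait_seconds, log))
        for i in (1, 2)
    ]
    started = time.perf_counter()
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return log, time.perf_counter() - started

log, elapsed = run_interleaved()
print(log)
print(f"elapsed: {elapsed:.2f}s")
```

Each task waits 0.3 seconds, yet the total should come out close to 0.3 seconds rather than 0.6, because the second task gets to start while the first one is still waiting.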

Asynchrony

Finally, we arrive at asynchrony. An asynchronous process is a process that is not synchronous (and I’m quite pleased with myself for that one). As you can see, the term is very broad.

Concurrency, parallelism, and semi-parallelism all have to do with asynchrony. Everything is more or less related and, in the end, all of it is asynchrony in the broad sense.

But, in general, we usually call a process “asynchronous” when it involves a very long wait or block. For example, waiting for a user to press a key, for a file to be read, or for a communication to arrive.

So that these processes do not block the flow of the main program, they are launched with a concurrency mechanism that makes them non-blocking. We then say that they have been launched asynchronously.
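As one possible illustration, here is a minimal sketch with Python's `asyncio`. The `read_file_slowly` coroutine is a hypothetical stand-in for a long wait (it just sleeps), and `asyncio` is only one of several mechanisms you could use for this.

```python
import asyncio

async def read_file_slowly():
    """Hypothetical stand-in for a long wait such as reading a file."""
    await asyncio.sleep(0.2)
    return "file contents"

async def main():
    events = []
    pending = asyncio.create_task(read_file_slowly())  # launched, not awaited yet
    events.append("read launched")
    events.append("main flow keeps going")  # runs without waiting for the read
    result = await pending                  # only now do we wait for the result
    events.append(f"read finished: {result}")
    return events

print(asyncio.run(main()))
# ['read launched', 'main flow keeps going', 'read finished: file contents']
```

The main flow is free to do other things after launching the slow operation, and only stops at the point where it actually needs the result.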

Formal Definition

Now that we have seen the different terms, let’s go for a slightly more rigorous definition of what each one of them is.

Asynchrony

Execution model in which operations do not block the program flow and can be executed in a non-sequential manner.

Concurrency

The ability of a system to manage multiple tasks that overlap (concur) in time.

Parallelism

Execution of multiple processes on different cores of a processor, allowing true simultaneous processing.

Semi-Parallelism

“Simulated” parallelism within the same core, performed by activating and pausing tasks, and dedicating processor time to them alternately.

As we have seen, these terms are more or less simple, but they are related (and mixed up with each other). As I said, what matters more than the words and the formal definitions is knowing what they are and understanding how they work.